Prerequisites

To complete this quickstart, you need the following:

- An active AIsa account.

- A valid API key generated from the AIsa dashboard.

- A foundational understanding of OpenAI-style Chat Completions APIs.

Step 1: Account Creation and API Key Generation

The first step is to create an account on the platform and generate an API key for authentication.

- Navigate to the AIsa registration portal and create an account. The platform supports standard email registration, which requires verifying your email address before setting a password, as well as Single Sign-On (SSO) via Google OAuth and GitHub OAuth.

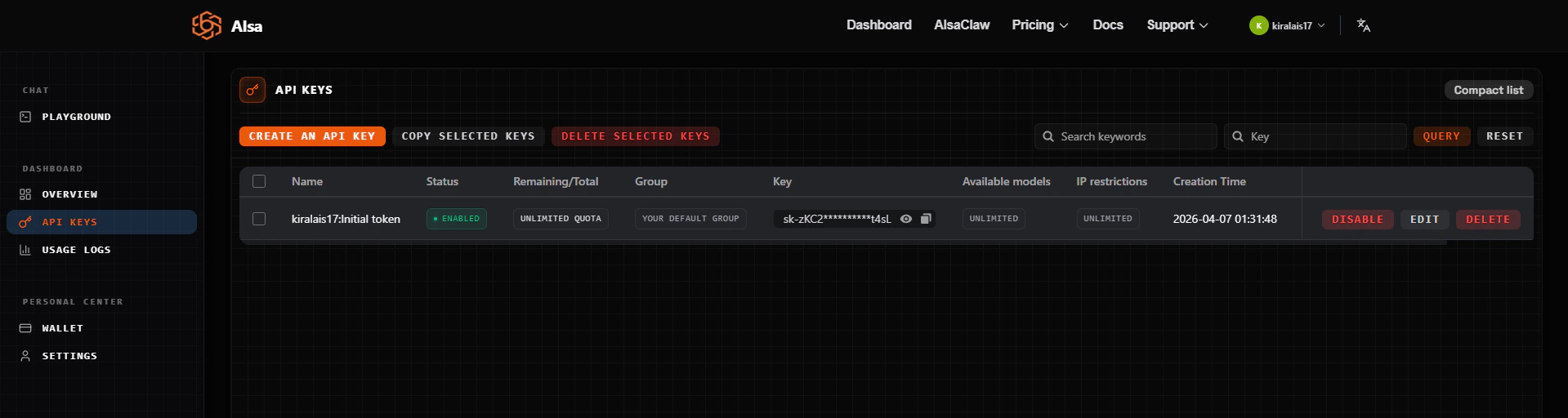

- Upon successful registration, you are directed to the API Keys page, where a key has already been generated for you. Copy this key and store it securely: it authenticates all requests and is tied to your usage and billing profile.

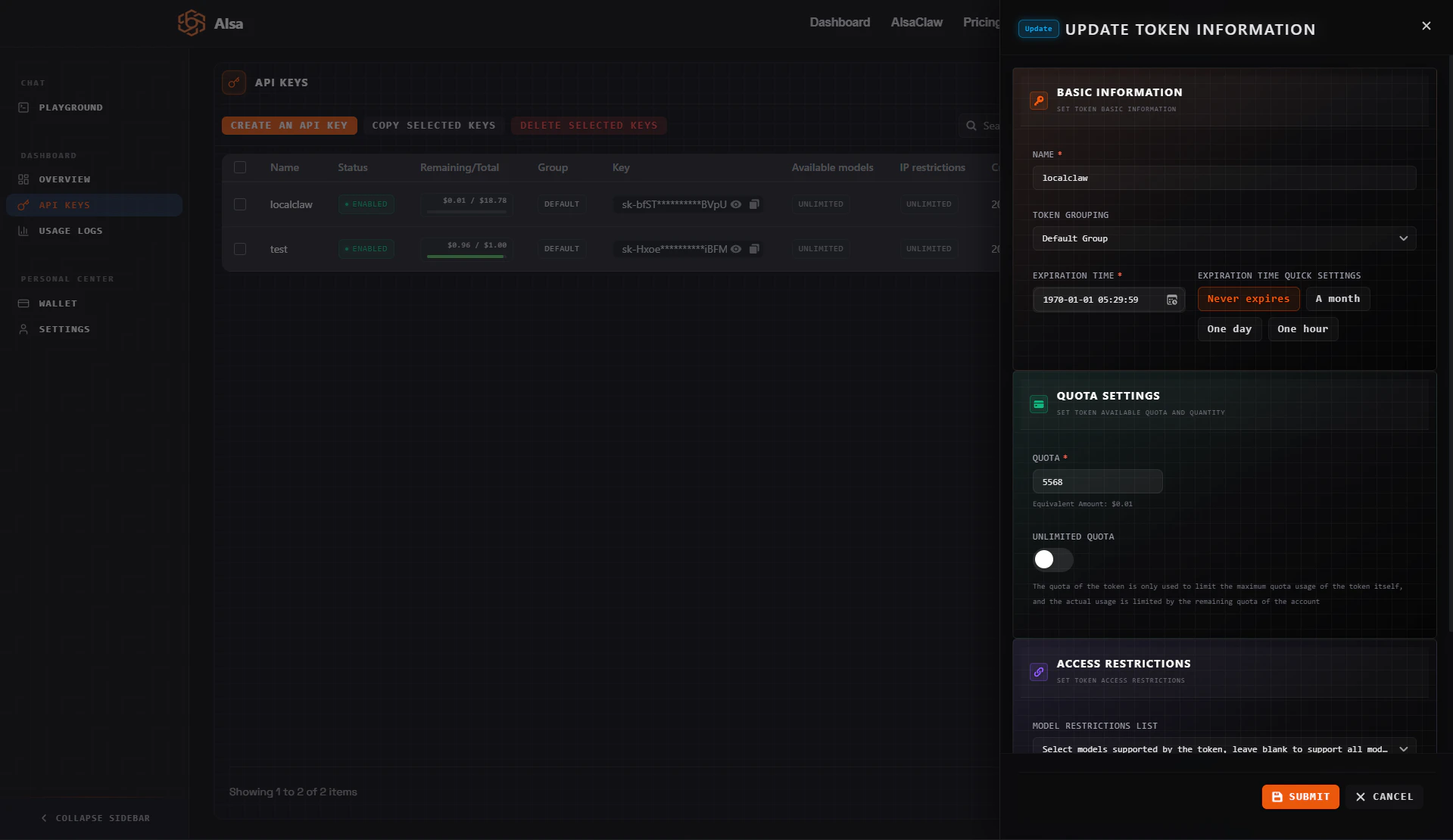

- To create additional API keys or set spending limits, return to the API Keys page at any time. When creating or editing a key, you can assign it a quota, which is the maximum amount that key is permitted to spend.

Note: A key’s quota is a spending cap on that specific key, not your total funds. Your credit balance is the overall amount available in your account wallet; a key can only spend up to whichever is lower, its quota or your remaining balance. This is useful when sharing keys across projects or team members, as it prevents any single key from consuming your entire balance. You can also remove this quota by enabling unlimited quota for a particular API key.
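The rule in the note above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not AIsa's billing logic: the function name `spendable_amount` is hypothetical, amounts are unitless floats, and `None` is used here to stand for a key with unlimited quota enabled.

```python
from typing import Optional


def spendable_amount(balance: float, quota: Optional[float]) -> float:
    """Return the most a key can spend: the lower of its quota and the balance.

    quota=None models a key with unlimited quota enabled (an assumption
    made for this sketch), so only the account balance limits spending.
    """
    if quota is None:
        return balance
    return min(quota, balance)


print(spendable_amount(balance=50.0, quota=10.0))  # 10.0, the quota is lower
print(spendable_amount(balance=5.0, quota=10.0))   # 5.0, the balance is lower
print(spendable_amount(balance=50.0, quota=None))  # 50.0, unlimited quota
```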

Step 2: Executing Your First API Call

AIsa exposes a single API endpoint (https://api.aisa.one/v1) that dynamically routes requests to multiple providers based on the specified model parameter.

Because the AIsa gateway is fully compliant with OpenAI’s Chat Completions API specification, developers only need to modify three variables in their existing codebases:

- base_url: Must point to the AIsa endpoint.

- api_key: Must be your generated AIsa token.

- model: Must be a valid model identifier supported by AIsa.

Example: REST API Request via cURL

A first call is a standard HTTP POST request to the completions endpoint. The response includes the choices array, the generated message, and detailed token usage metrics for billing transparency.
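As a rough Python counterpart to a raw HTTP call, the sketch below builds the POST request with the standard library and reads the fields mentioned above out of a parsed response. Several details are assumptions based on the OpenAI Chat Completions convention rather than on AIsa documentation: the `/chat/completions` path appended to the base URL, the `Authorization: Bearer` header, and the `choices[0].message.content` and `usage` response fields. The helper names are hypothetical.

```python
import json
import urllib.request

AISA_BASE_URL = "https://api.aisa.one/v1"  # endpoint given in this guide


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a Chat Completions POST request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{AISA_BASE_URL}/chat/completions",  # assumed OpenAI-style path
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed Bearer-token auth
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_reply(response_body: dict) -> tuple[str, dict]:
    """Return the generated message text and the token usage metrics."""
    message = response_body["choices"][0]["message"]["content"]
    return message, response_body.get("usage", {})
```

To send the request, pass it to `urllib.request.urlopen` and decode the body with `json.load`; the `model` argument must be an identifier that AIsa actually supports.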

Step 3: Integrating with Official SDKs

Because of its strict API compatibility, AIsa works directly with the official OpenAI SDKs, eliminating the need for custom libraries.

Python SDK Integration

To use AIsa from Python, install the openai package and instantiate the client with your AIsa credentials.
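A minimal sketch of that setup, assuming version 1.x of the `openai` package (installed with `pip install openai`), might look like the following. The environment variable name `AISA_API_KEY` and the helper names `make_client` and `ask` are hypothetical, and the model identifier is left as a parameter because it must come from AIsa's list of supported models.

```python
import os

AISA_BASE_URL = "https://api.aisa.one/v1"  # endpoint given in this guide


def make_client():
    """Create an OpenAI SDK client that talks to the AIsa endpoint."""
    # Imported here so this file loads even before `pip install openai`.
    from openai import OpenAI

    return OpenAI(
        base_url=AISA_BASE_URL,
        api_key=os.environ["AISA_API_KEY"],  # hypothetical variable name
    )


def ask(prompt: str, model: str) -> str:
    """Send one user message and return the generated text."""
    response = make_client().chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content
```

Calling `ask("Hello", model=...)` with a supported model identifier returns the generated reply. Reading the key from the environment keeps it out of source control.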

TypeScript SDK Integration

Similarly, Node.js environments can use the official openai npm package.